Samsung, SK hynix, Hyundai announce landmark deals with Nvidia at GTC 2026


- LEE JAE-LIM


![Samsung Electronics' booth, which displayed the company's seventh-generation high bandwidth memory, HBM4E, for the first time, bustles with visitors at the Nvidia GTC global AI conference in San Jose, California, on March 16. [SAMSUNG ELECTRONICS]](https://koreaseafood.online/data/photo/2026/03/17/45e8c623-cee6-418f-8720-3018100f1113.jpg)

Samsung Electronics' booth, which displayed the company's seventh-generation high bandwidth memory, HBM4E, for the first time, bustles with visitors at the Nvidia GTC global AI conference in San Jose, California, on March 16. [SAMSUNG ELECTRONICS]

Korea’s industrial heavyweights — Samsung Electronics, SK hynix and Hyundai Motor — announced a series of landmark deals with Nvidia at its GTC 2026 developer conference in San Jose on Monday, placing Korean manufacturers in the spotlight for their growing ties with the U.S. company on AI infrastructure.

Samsung Electronics drew global attention at the event after a shout-out from Nvidia CEO Jensen Huang, who said the chipmaker is “cranking” production of Groq’s latest AI chip, the Nvidia Groq 3 LPU.

“I want to thank Samsung, who manufactures the Groq 3 LPU chip for us, and they’re cranking as hard as they can,” he said.

LPU stands for language processing unit, a chip designed for AI inference. Huang added that the chip is already in production and is expected to begin shipping in the second half of the year, likely around the third quarter.

![Nvidia CEO Jensen Huang speaks during an Nvidia conference focusing on artificial intelligence in San Jose, California, on March 16. [AP/YONHAP]](https://koreaseafood.online/data/photo/2026/03/17/31b111fa-9a5f-4aa1-9515-90238c008ff9.jpg)

Nvidia CEO Jensen Huang speaks during an Nvidia conference focusing on artificial intelligence in San Jose, California, on March 16. [AP/YONHAP]

Groq’s chips are currently produced at Samsung’s Pyeongtaek campus in Gyeonggi using the company’s 4-nanometer process. It remains unclear, however, whether production will eventually move to Samsung’s foundry plant in Taylor, Texas, once that plant begins mass production next year.

Although rumors had circulated for months, this marked the first time Huang publicly confirmed that Samsung’s foundry business is manufacturing Groq’s newest AI chip. Huang also said the inference accelerator rack, the Nvidia Groq 3 LPX that is powered by 256 Groq 3 LPUs, can speed up inference workloads by as much as 35 times when paired with the Vera Rubin architecture.

Nvidia invested $20 billion in the U.S. startup in December of last year to license Groq’s inference technology and recruit talent from the company.

Samsung also unveiled its seventh-generation high bandwidth memory, HBM4E, at its booth at the event ahead of planned sample shipments in mid-2026.

![Nvidia CEO Jensen Huang poses for a photo with Samsung executives at the Nvidia GTC global AI conference in San Jose, California, on March 16. [REUTERS/YONHAP]](https://koreaseafood.online/data/photo/2026/03/17/c0ba0358-7cdb-4e0c-92d0-878e4b5c0d11.jpg)

Nvidia CEO Jensen Huang poses for a photo with Samsung executives at the Nvidia GTC global AI conference in San Jose, California, on March 16. [REUTERS/YONHAP]

It marked Samsung’s first public showcase of the next-generation memory technology ahead of mass production and signaled that the company is already looking ahead to supply the advanced memory to the U.S. company alongside rivals such as SK hynix.

The HBM4E chips are built on 10-nanometer-class 1c dynamic random access memory (DRAM), Samsung’s advanced memory manufacturing node, with a 4-nanometer logic process used to control memory operations. The chips can deliver processing speeds of 16 gigabits per second (Gbps), a 36 percent improvement over the previous generation’s 11.7 Gbps and double the current industry standard of 8 Gbps. The chips also achieve 4.0 terabytes per second of bandwidth, the total amount of data the memory can transfer each second.
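As a rough check on how the 16 Gbps speed relates to the 4.0 terabytes per second of bandwidth, the short Python sketch below multiplies the per-pin data rate by the number of data pins on a stack. The article does not state the interface width. The 2,048-bit figure is the per-stack width in the JEDEC HBM4 standard and is only assumed here to carry over to HBM4E, and the function name is purely illustrative.

```python
def stack_bandwidth_gb_per_s(pin_speed_gbps, interface_width_bits=2048):
    """Peak per-stack bandwidth in gigabytes per second.

    pin_speed_gbps: per-pin data rate in gigabits per second.
    interface_width_bits: number of data pins per stack. The default of
    2048 is an assumption taken from the JEDEC HBM4 standard, not a
    figure given in the article.
    """
    # Gigabits per second across all pins, divided by 8 bits per byte.
    return pin_speed_gbps * interface_width_bits / 8


hbm4e = stack_bandwidth_gb_per_s(16)     # HBM4E speed cited in the article
baseline = stack_bandwidth_gb_per_s(8)   # 8 Gbps industry standard cited in the article

print(hbm4e, baseline, hbm4e / baseline)  # 4096.0 2048.0 2.0
```

Under that assumption, 16 Gbps works out to 4,096 gigabytes per second per stack, in line with the roughly 4.0 terabytes per second quoted, and to twice the bandwidth of a stack running at 8 Gbps.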

Samsung’s sixth-generation HBM4, which has already entered mass production, will be integrated into Nvidia’s Vera Rubin platform.

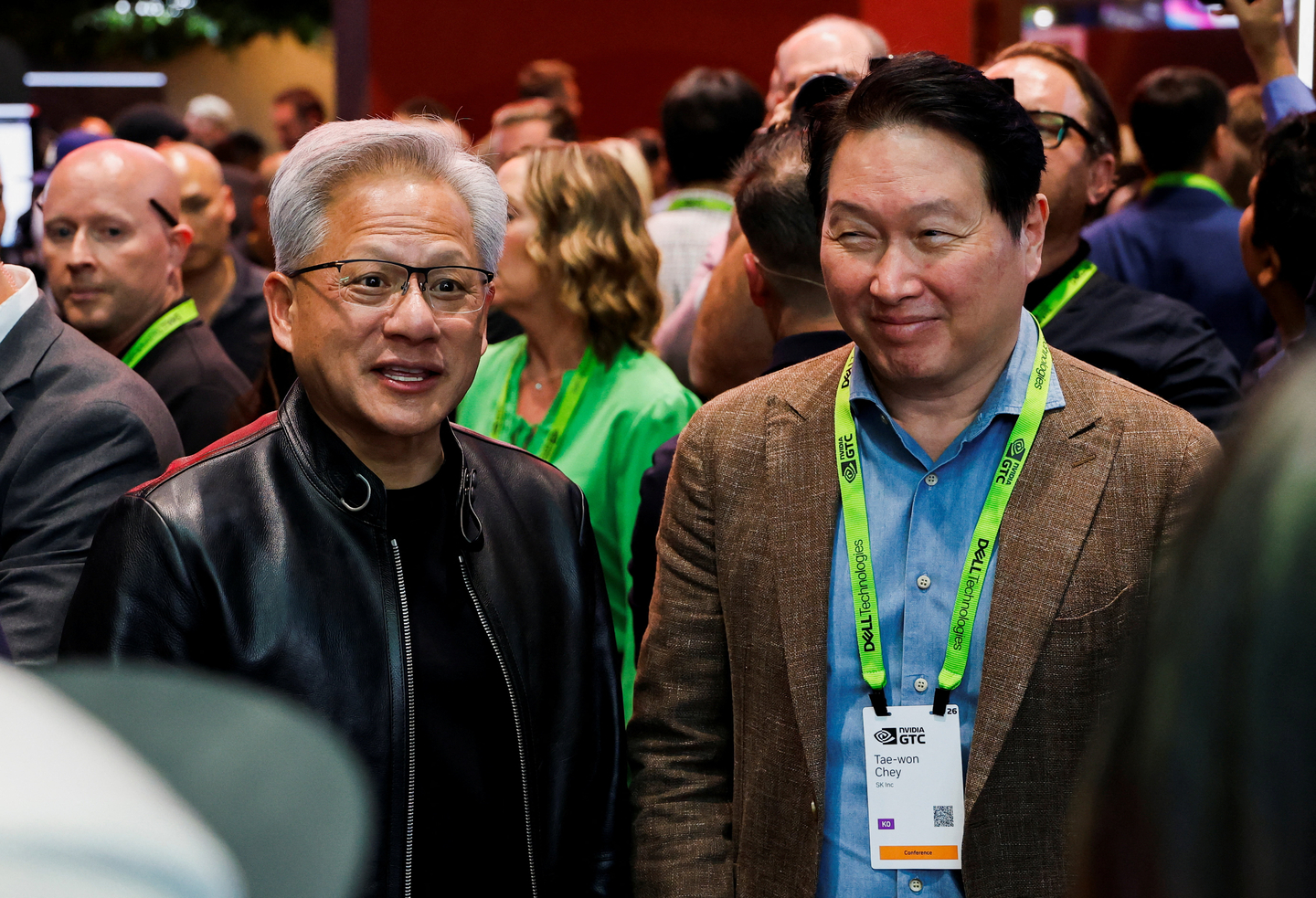

![Nvidia CEO Jensen Huang and SK Group Chairman Chey Tae-won attend the Nvidia GTC global AI conference in San Jose, California, on March 16. [REUTERS/YONHAP]](https://koreaseafood.online/data/photo/2026/03/17/1e78af9a-358c-406b-bb7f-9246d60a591d.jpg)

Nvidia CEO Jensen Huang and SK Group Chairman Chey Tae-won attend the Nvidia GTC global AI conference in San Jose, California, on March 16. [REUTERS/YONHAP]

SK hynix’s booth featured HBM4 that is still undergoing final testing by Nvidia. SK Group Chairman Chey Tae-won and SK hynix CEO Kwak Noh-jung attended the event, with the chairman telling reporters on the sidelines that he is considering a U.S. stock market listing through an American depositary receipt (ADR) program.

“I think it would allow the company to gain exposure not only to Korean shareholders but also to U.S. and global investors,” he said. Chey also forecast the global memory shortage would last until 2030, saying the supply crunch stems from a lack of wafers.

“Producing HBM requires a large number of wafers, and securing additional wafer capacity takes at least four to five years,” he said, adding that SK hynix plans to announce a new plan to stabilize DRAM prices soon.


For Hyundai Motor, the partnership expands collaboration with the U.S. company on autonomous driving technologies and robotaxi services.

The automaker plans to apply Nvidia’s Level 2 and higher autonomous driving technologies to selected vehicle models. Over the longer term, the two sides aim to extend their cooperation to Level 4 robotaxis. That effort will be led through Motional, a U.S.-based autonomous driving joint venture majority-owned by the Korean automaker.

Hyundai also plans to use Nvidia’s Drive Hyperion architecture for the initiative. The platform will help build a data feedback loop that involves collecting driving data such as video, language and behavior inputs, training AI models on that data, applying the models to real vehicles and continuously improving data quality.

BY LEE JAE-LIM [[email protected]]

with the Korea JoongAng Daily
